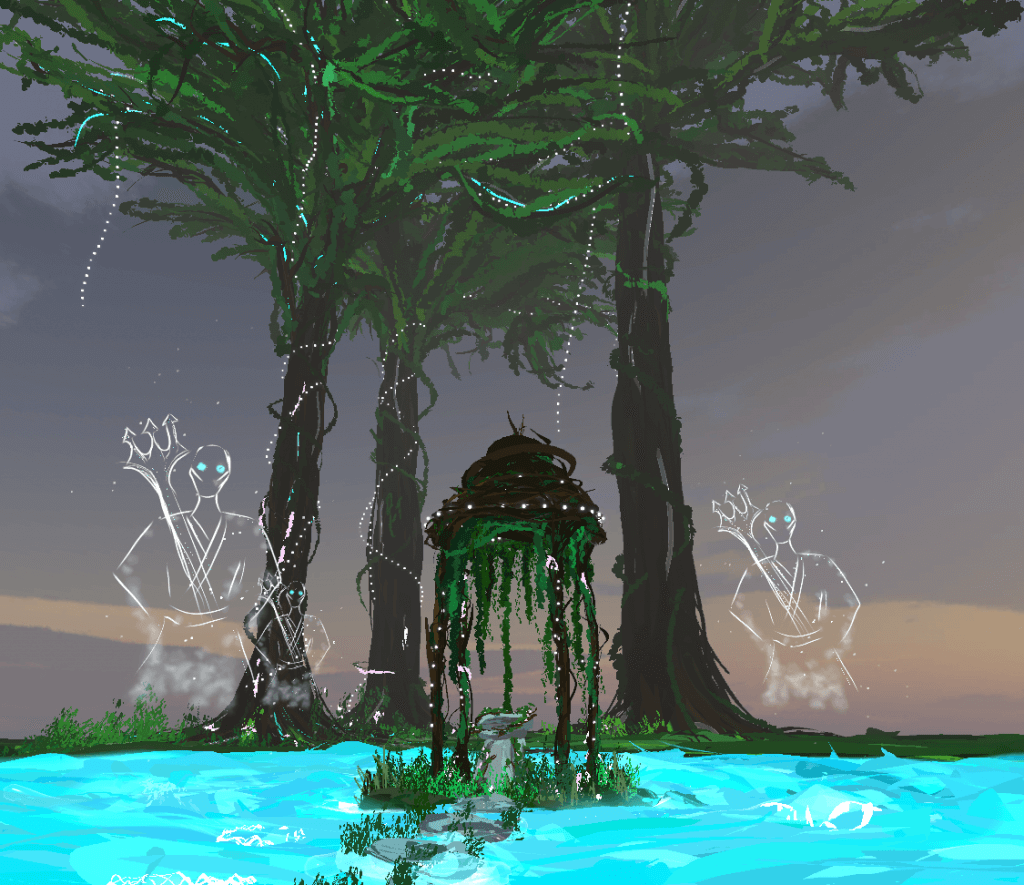

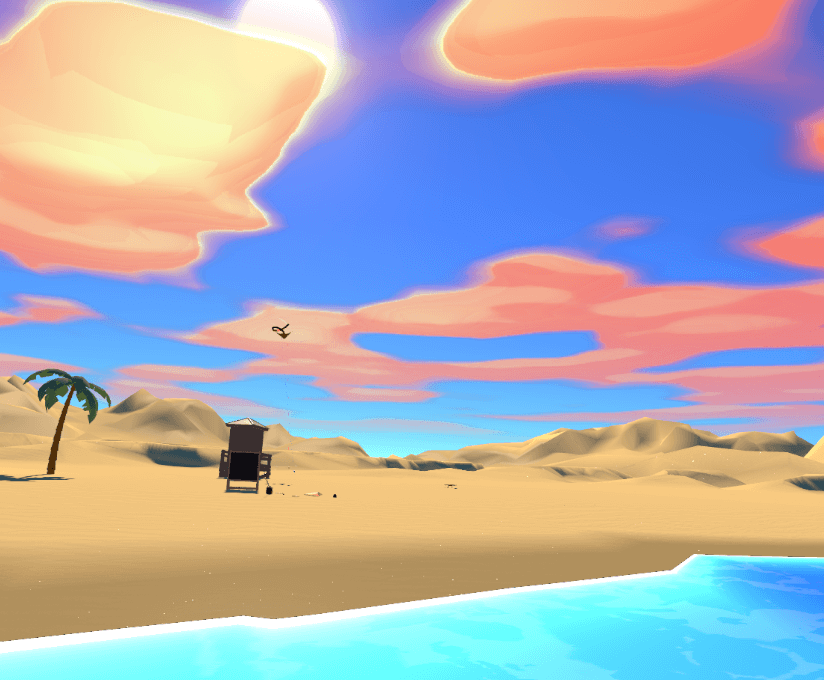

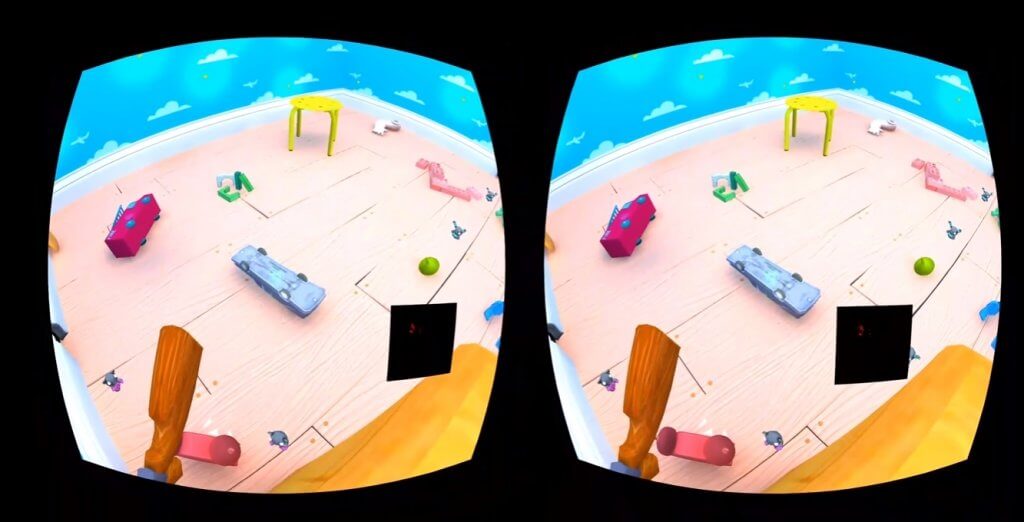

By now you should have realised that interaction within Virtual Reality is my biggest turn-on. After playing with DanceMats, blink detection, custom controllers and more, there was still one key experiment missing: balance control.

The Wii Balance Board is an inexpensive (£10), obsolete but reliable piece of hardware. With two pressure plates per foot (toe and heel), it can precisely measure the user's balance and share it over Bluetooth. It has been used in a few experimental VR games in the past, but I still wanted to give it a go and try to design around the problems in a different way.
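To give a feel for what "measuring balance" means with four pressure plates, here is a minimal sketch of how the four loads could be turned into a single lean direction. It is not the code used in this project: the `BoardReading` type, its field names, the `balance` function and the `min_total` threshold are all made up for illustration, and it assumes the readings have already been received over Bluetooth and converted to kilograms.

```python
from dataclasses import dataclass


@dataclass
class BoardReading:
    # Hypothetical field names: load on each pressure plate, in kilograms.
    left_toe: float
    right_toe: float
    left_heel: float
    right_heel: float


def balance(reading: BoardReading, min_total: float = 5.0) -> tuple[float, float]:
    """Return (x, y) in the range -1..1 for non-negative loads.

    x > 0 means leaning right, y > 0 means leaning forward (onto the toes).
    min_total is an assumed threshold below which the board is treated as
    empty, so sensor noise does not produce a wild lean value.
    """
    total = (reading.left_toe + reading.right_toe
             + reading.left_heel + reading.right_heel)
    if total < min_total:
        return (0.0, 0.0)

    right = reading.right_toe + reading.right_heel
    left = reading.left_toe + reading.left_heel
    toes = reading.left_toe + reading.right_toe
    heels = reading.left_heel + reading.right_heel

    # Normalise by total load so the result does not depend on body weight.
    return ((right - left) / total, (toes - heels) / total)
```

Standing evenly gives `(0.0, 0.0)`, putting all the weight on the right foot gives `x = 1.0`, and rocking fully onto the toes gives `y = 1.0`. Dividing by the total load is what lets the same lean feel the same for a light or a heavy player.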